How to get bigger, quicker wins by optimizing your testing workflow


To grow quickly, you need to implement quickly, so our work with clients goes beyond suggesting what they should test; we build their in-house capability to “get stuff done.” This article describes a framework for speeding up your testing—so you can grow your profits quicker.

Many small changes or one big one?

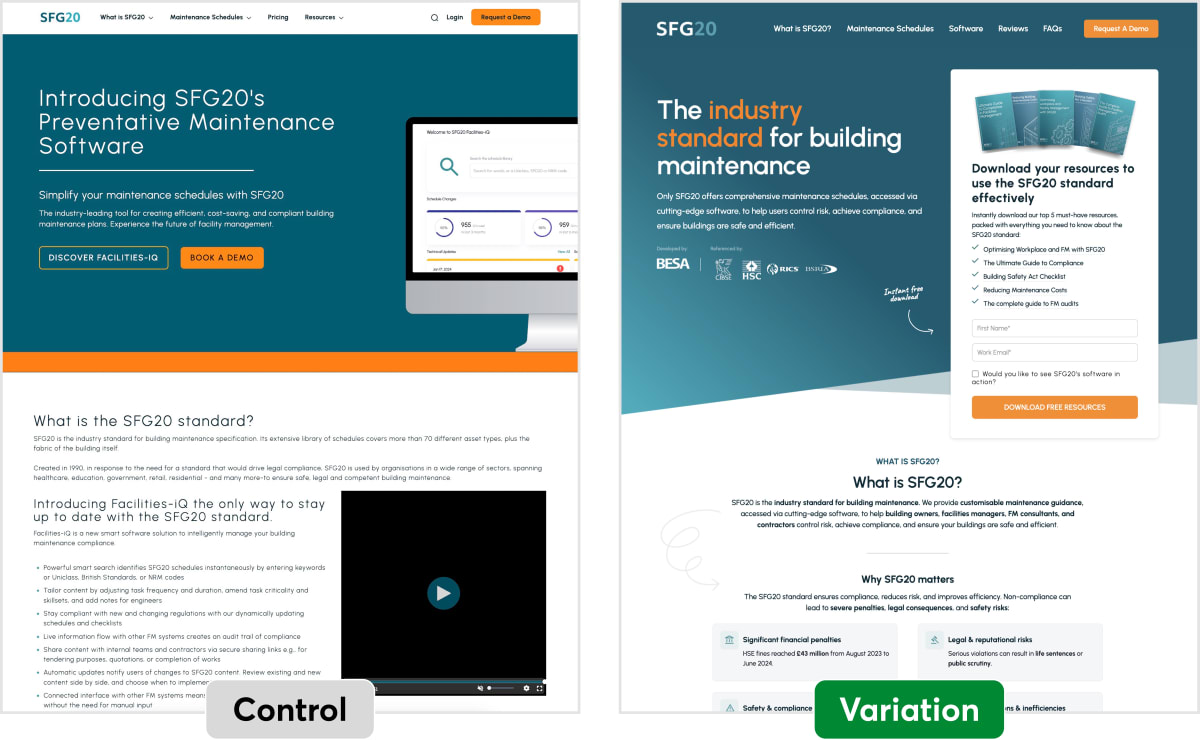

If you’ve read our Win Report, “A year’s worth of PPC leads in just three weeks,” you may recall that our winning landing page was very different from (and much more effective than) the control.

You might wonder whether we arrived at the final winning version via a series of smaller A/B tests, or whether we had simply tested the new page against the old one.

We had done the latter.

But we wouldn’t always do that.

How much should you incorporate into each A/B test? At one extreme, you could test every pixel change. At the other extreme, you could throw your whole year’s worth of ideas into one test. The ideal lies somewhere in between. But where? Here are some of the issues that our consultants consider when deciding how many changes to include in a single A/B test. These points should help you to decide which approach is best for you.

(Note that this question applies to multivariate tests as well as to A/B/n tests. In both cases, you’re faced with the question of how much to change in each page element.)

Why people do—and don’t—run tests

First, consider the main reasons why people do, and don’t, run A/B tests. Think of them as arrows: a green arrow for the reason to test, and two grey arrows for the reasons not to.

The reason to run an A/B test (represented by the green arrow) is to learn how a particular change affects conversion.

However, there are two drawbacks (represented by the grey arrows): (i) each test costs money and takes time to implement, and (ii) each test takes time to run.

In practice, each forthcoming test can feel like a departing bus. Ideally, you would put each change onto its own bus. However, buses may not come as often as you’d like, so it can be wise to squeeze in several changes, rather than waiting for the next one to come along. In the bus analogy, the grey arrows represent the cost of each bus. The green arrow represents how important it is for each change to have its own bus.

When should you give each change its own A/B test?

You may want to A/B test every small change if:

- The green arrow is long: In other words, you have a strong desire to learn how each change affects conversion, perhaps for one of the following reasons:

  - Because the change is expensive. For example, if you’re about to offer a bold guarantee, change the price, or start giving away a premium (a free gift), you need to know how successful the change is, so you can work out whether it’s cost-effective.

  - Because the stakes are high. For example, you may be planning to implement this particular change on other sites, on other pages, or in other media (e.g., in offline advertising), so a bad decision would be costly.

  - Because you aren’t confident that the changes will be effective, so you need an A/B test to tell you whether they are. This is fair enough: most marketers are overconfident in their ability to spot a winner, and A/B testing brings them down to earth with a bump.

- The upper grey arrow is short: The time and cost of implementing a test are low.

- The lower grey arrow is short: A test reaches significance quickly, perhaps because (i) the page gets a lot of traffic, or (ii) you are testing changes that greatly outperform the control (large differences are detected with fewer visitors than small ones).
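To see why traffic and the size of the difference matter so much for the lower grey arrow, here is a rough sketch in Python of how long a simple A/B test might take to reach significance. It uses the standard normal-approximation sample-size formula for comparing two conversion rates, with a 5% two-sided significance level and 80% power. Those settings, the function names, and the example traffic and conversion figures are illustrative assumptions, not taken from any particular testing tool; real tools may use different methods (for example, sequential or Bayesian statistics).

```python
import math
from statistics import NormalDist


def visitors_per_variant(baseline_rate: float, relative_lift: float,
                         alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate visitors needed in EACH variant to detect the given lift
    with a two-sided, two-proportion z-test (normal approximation,
    no continuity correction). Illustrative sketch only."""
    p1 = baseline_rate                        # control conversion rate
    p2 = baseline_rate * (1 + relative_lift)  # assumed variant conversion rate
    if not (0 < p1 < 1 and 0 < p2 < 1) or p1 == p2:
        raise ValueError("rates must be in (0, 1) and must differ")
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    root = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
            + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2)))
    return math.ceil(root ** 2 / (p2 - p1) ** 2)


def days_to_significance(daily_visitors: int, baseline_rate: float,
                         relative_lift: float, variants: int = 2) -> int:
    """Days needed if the page's traffic is split evenly across all variants."""
    n = visitors_per_variant(baseline_rate, relative_lift)
    return math.ceil(n * variants / daily_visitors)


# Hypothetical page: 1,000 visitors/day and a 3% baseline conversion rate.
for lift in (0.05, 0.30):
    print(f"{lift:.0%} lift: about {days_to_significance(1000, 0.03, lift)} days")
```

Because the required sample grows roughly with the inverse square of the difference being detected, a modest lift on a low-traffic page can take many months, whereas a large lift, or more traffic, shortens the test dramatically.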

When should you include many changes in a single A/B test?

You may prefer to include many changes in one A/B test if:

- The green arrow is short: In other words, you’re okay not knowing how each individual change affects conversion.

  - This may be because you’re testing many changes, and you’d be happy as long as the overall conversion rate increases.

  - Or it may be because you’re highly confident that your changes will be effective. For example, you may be fixing things that are broken, or you may already have run A/B usability tests on them.

- The grey arrows are long: Tests take a lot of time or money to implement, or a long time to run. For example, because:

  - It takes you a lot of time and effort to get a test implemented. This can happen if (i) your workflows for creating content are inefficient, (ii) your company’s approval process is bureaucratic, (iii) your development resources are inadequate, or (iv) your software and technology are poor or poorly integrated. (Sometimes, “corporate brand police,” regulatory bodies, and IT departments feel like goalkeepers who were put there to stop you from scoring.) Clients often ask us to help them improve these aspects of their business, knowing how much difference they make to their overall success.

  - Your changes are intertwined, so it would be fiddly or impossible to split them into separate tests or to run them as a multivariate test.

  - The page has few visitors, so a small improvement would take months to be detected (i.e., to reach statistical significance). Multivariate testing (MVT) can ease this problem by carrying out several A/B tests simultaneously on the same page, so that each visitor provides data on every change being tested. However, it usually takes more work to set up a multivariate test than a straightforward A/B/n test.

  - You have an abundance of good, research-driven ideas to test, and implementation has become the bottleneck. There’s simply not enough time to implement and run each idea as a separate A/B test. This also has an opportunity cost: while a profitable idea sits on your to-do list, you effectively lose money every day until it’s implemented.
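The opportunity cost of a growing backlog can be roughly quantified. The Python sketch below assumes, optimistically, that every idea in the backlog is a winner with a known lift, that tests run strictly one after another with a fixed duration, and that each winner starts earning as soon as its test ends. The function names and all figures are hypothetical.

```python
def daily_value(daily_visitors: float, baseline_rate: float,
                relative_lift: float, value_per_conversion: float) -> float:
    """Extra value per day once a winning change is live (all inputs assumed)."""
    extra_conversions = daily_visitors * baseline_rate * relative_lift
    return extra_conversions * value_per_conversion


def value_forgone(daily_values: list[float], days_per_test: int) -> float:
    """Value lost while a backlog of winning ideas is tested one at a time.

    Idea i (0-based) only goes live after (i + 1) tests have finished,
    so it earns nothing for (i + 1) * days_per_test days."""
    return sum(v * (i + 1) * days_per_test for i, v in enumerate(daily_values))


# Hypothetical backlog of three ideas on a page with 2,000 visitors/day,
# a 2% conversion rate, and $50 of value per conversion.
backlog = [daily_value(2000, 0.02, lift, 50) for lift in (0.10, 0.05, 0.05)]
print(f"Forgone while waiting: ${value_forgone(backlog, 14):,.0f}")
```

Even under these simplifying assumptions, the sum shows how the cost of waiting accumulates across a backlog, which is why squeezing several ideas onto one “bus” can be worth giving up some per-change learning.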

How to use these insights to grow your business faster

- Consider whether you might progress faster by including more, or fewer, changes in each test.

- Try to identify the bottleneck in your testing process, then remove it. For example:

  - If you’re short of good test ideas, find ways of generating more of them.

  - If you’re limited by the rate at which you can design and implement tests, look for ways of speeding things up. You’ll probably find that certain types of tests are easier to implement than others; start with those, and avoid tests that require new page layouts, complex code changes, or upstream approval.

  - If your page doesn’t have enough traffic, look for ways of getting more. For example, if you have many landing pages, consider whether you’d benefit from sending more traffic to one page, on which you can then run much quicker A/B tests.
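One simple way to think about the bottleneck is as a serial pipeline, in the spirit of the theory of constraints: tests can’t flow through faster than the slowest stage allows. The Python sketch below makes that concrete. The stage names and weekly capacities are hypothetical and would need to be estimated from your own process; real workflows are rarely this tidy.

```python
def bottleneck(capacity_per_week: dict[str, float]) -> tuple[str, float]:
    """Return the slowest stage and the pipeline's throughput.

    In a serial pipeline, tests can't flow faster than the slowest stage,
    so overall throughput equals the minimum stage capacity."""
    stage = min(capacity_per_week, key=capacity_per_week.get)
    return stage, capacity_per_week[stage]


# Hypothetical capacities, in tests per week that each stage can support.
pipeline = {
    "idea generation": 4.0,   # research-backed ideas produced
    "design and build": 1.5,  # tests implemented and approved
    "traffic": 7 / 21,        # tests concluded, if each needs 21 days on one page
}
print(bottleneck(pipeline))

# Doubling the idea supply doesn't help: the traffic constraint still binds.
more_ideas = {**pipeline, "idea generation": 8.0}
print(bottleneck(more_ideas))
```

The second call illustrates the risk of solving the wrong problem efficiently: improving a stage that isn’t the bottleneck leaves throughput unchanged.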

The bottom line

The right answer to “how many changes per test?” isn’t fixed—it shifts depending on your traffic, your implementation costs, your confidence in the changes, and how much you need to understand why something worked, not just that it did.

What stays constant is this: the goal is to learn and implement as fast as possible. That means regularly asking yourself where the friction actually is. Is it a shortage of ideas? Slow development cycles? A bureaucratic approval chain? Low traffic forcing you to wait months for significance? Each bottleneck calls for a different fix—and until you identify the right one, you risk solving the wrong problem efficiently.

The companies that grow fastest aren’t necessarily the ones with the cleverest test ideas. They’re the ones who’ve built a machine for turning ideas into live tests, and live tests into decisions. Optimise that machine, and everything else speeds up with it.


What’s your goal today?

1. Hire us to grow your company

We’ve generated hundreds of millions for our clients, using our unique CRE Methodology™. To discover how we can help grow your business:

- Read our case studies, client success stories, and video testimonials.

- Learn about us, and our unique values, beliefs and quirks.

- Visit our “Services” page to see the process by which we assess whether we’re a good fit for each other.

- Schedule your FREE website strategy session with one of our renowned experts.


2. Learn how to do conversion

Download a free copy of our Amazon #1 best-selling book, Making Websites Win, recommended by Google, Facebook, Microsoft, Moz, Econsultancy, and many more industry leaders. You’ll also be subscribed to our email newsletter and notified whenever we publish new articles or have something interesting to share.

Browse hundreds of articles, containing an amazing number of useful tools and techniques. Many readers tell us they have doubled their sales by following the advice in these articles.


3. Join our team

If you want to join our team—or discover why our team members love working with us—then see our “Careers” page.

4. Contact us

We help businesses worldwide, so get in touch!

© 2026 Conversion Rate Experts Limited. All rights reserved.

A Brandwidth Group Company.